Developed by Suzanne White Brahmia, Alexis Olsho, Trevor I. Smith, Andrew Boudreaux, Philip Eaton, and Charlotte Zimmerman

| Purpose | To assess quantitative literacy over the span of students’ instruction in introductory calculus-based physics. |
|---|---|
| Format | Pre/post, Multiple-choice, Multiple-response |
| Duration | 30-45 min |
| Focus | Scientific reasoning (proportional reasoning, reasoning with signed quantities, co-variational reasoning) |
| Level | Intro college |

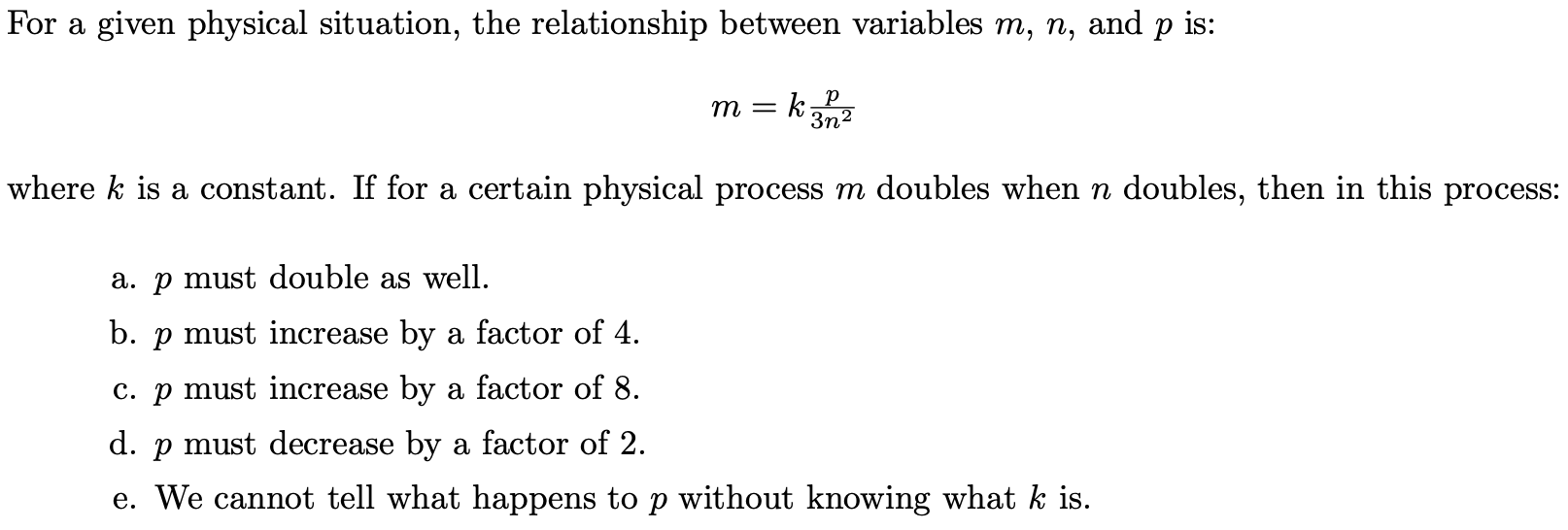

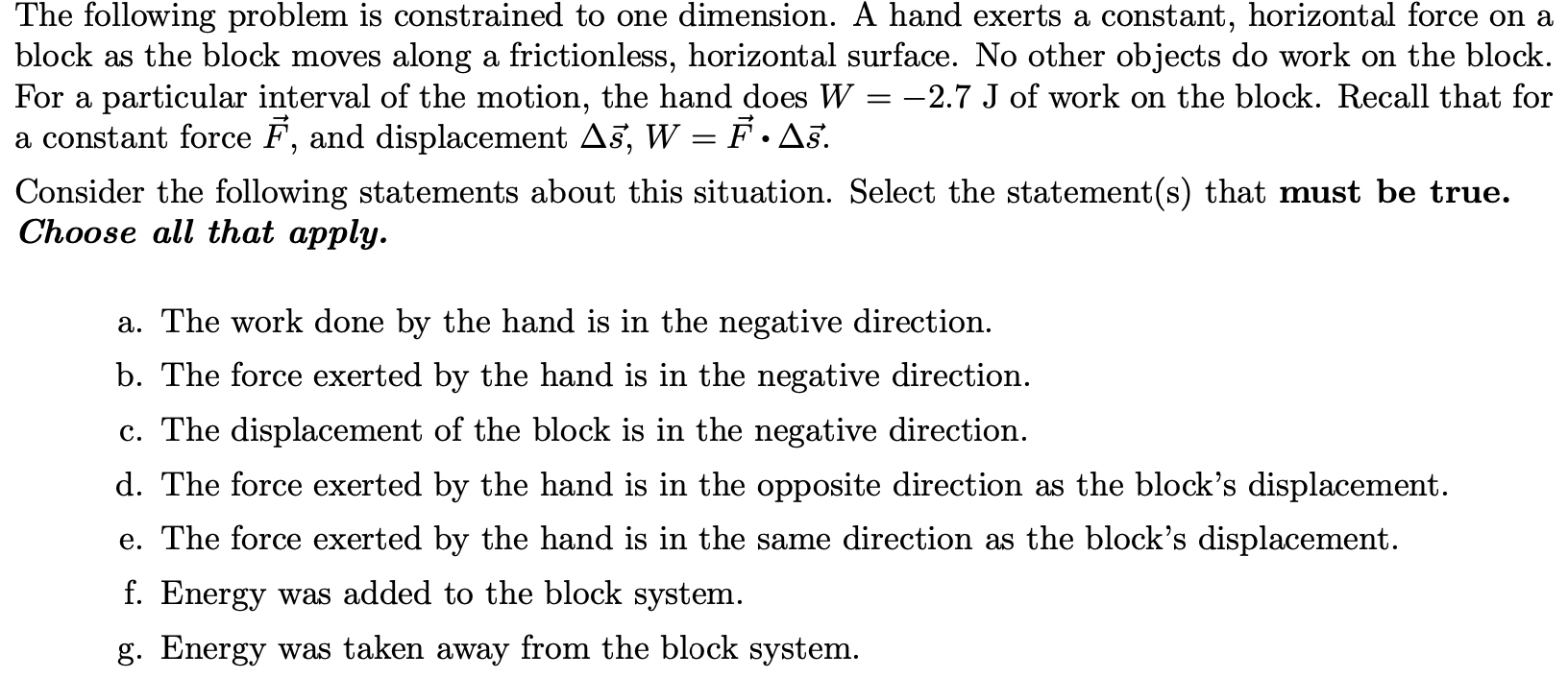

Sample questions from the PIQL:

PIQL Implementation and Troubleshooting Guide

Everything you need to know about implementing the PIQL in your class.


The PIQL has the highest level of research validation, corresponding to all seven of the validation categories below.

Research Validation Summary

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

The multiple-choice questions on the PIQL were drawn from three sources: a bank of questions from previous work by some of the authors; items from mathematics education research, including some from the Precalculus Concept Assessment; and questions newly developed by the authors. The questions were reviewed by experts, tested in student interviews, and revised. The PIQL was then given iteratively as a pre- and post-test to introductory physics students over 8 terms and revised further. Statistical analyses of difficulty, discrimination, and reliability were performed and gave reasonable results. A confirmatory factor analysis was performed to test the intended three-factor structure of the test (ratios and proportions, covariation, and signed quantities/negativity). The developers did not find evidence for this three-factor structure and followed up with an exploratory factor analysis, which indicated a unidimensional structure. The PIQL has been given to over 2000 students at two institutions, and the results are published in one peer-reviewed paper.
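For readers unfamiliar with the item statistics named above (difficulty, discrimination, reliability), the following is a minimal Python sketch using standard classical test theory definitions. It is not the developers' analysis code: the function names and the toy response matrix are hypothetical, and Cronbach's alpha is assumed as the reliability measure, which the summary above does not specify.

```python
from statistics import mean, pvariance

# Hypothetical toy data: rows are students, columns are items (1 = correct).
# These numbers are invented for illustration and are NOT PIQL data.
scores = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]


def columns(matrix):
    """Return the item columns of a student-by-item score matrix."""
    return [list(col) for col in zip(*matrix)]


def item_difficulty(matrix):
    """Classical difficulty index: fraction of students answering each item correctly."""
    return [mean(col) for col in columns(matrix)]


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either variable has zero variance."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x)
    sy = sum((b - my) ** 2 for b in y)
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy) ** 0.5


def item_discrimination(matrix):
    """Item-rest correlation: each item against the total score on the other items."""
    totals = [sum(row) for row in matrix]
    result = []
    for col in columns(matrix):
        rest = [t - c for t, c in zip(totals, col)]
        result.append(pearson(col, rest))
    return result


def cronbach_alpha(matrix):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(matrix[0])
    item_var = sum(pvariance(col) for col in columns(matrix))
    total_var = pvariance([sum(row) for row in matrix])
    return k / (k - 1) * (1 - item_var / total_var)


difficulty = item_difficulty(scores)
discrimination = item_discrimination(scores)
alpha = cronbach_alpha(scores)

print("difficulty:", [round(d, 2) for d in difficulty])
print("discrimination:", [round(d, 2) for d in discrimination])
print("alpha:", round(alpha, 3))
```

A higher difficulty index means an easier item, and a positive item-rest correlation means that students who do well on the rest of the test also tend to answer that item correctly.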

References

- B. Boyle, T. Smith, C. Zimmerman, and S. White Brahmia, Validating Shorter Versions of the Physics Inventory of Quantitative Literacy, presented at the Physics Education Research Conference 2024, Boston, MA, 2024.

- S. Brahmia, A. Olsho, T. Smith, A. Boudreaux, P. Eaton, and C. Zimmerman, Physics Inventory of Quantitative Literacy: A tool for assessing mathematical reasoning in introductory physics, Phys. Rev. Phys. Educ. Res. 17 (2), 020129 (2021).

- A. Olsho, S. Brahmia, C. Zimmerman, T. Smith, P. Eaton, and A. Boudreaux, Online administration of a reasoning inventory in development, presented at the Physics Education Research Conference 2020, Virtual Conference, 2020.

- A. Olsho, T. Smith, P. Eaton, C. Zimmerman, A. Boudreaux, and S. Brahmia, Online test administration results in students selecting more responses to multiple-choice-multiple-response items, Phys. Rev. Phys. Educ. Res. 19 (1), 013101 (2023).

- T. Smith, P. Eaton, S. Brahmia, A. Olsho, A. Boudreaux, C. DePalma, V. LaSasso, C. Whitener, and S. Straguzzi, Using psychometric tools as a window into students’ quantitative reasoning in introductory physics, presented at the Physics Education Research Conference 2019, Provo, UT, 2019.

- T. Smith, P. Eaton, S. Brahmia, A. Olsho, C. Zimmerman, and A. Boudreaux, Toward a valid instrument for measuring physics quantitative literacy, presented at the Physics Education Research Conference 2020, Virtual Conference, 2020.

- C. Zimmerman, Characterizing and Assessing Covariational Reasoning in Introductory Physics Contexts, University of Washington, 2023.

- C. Zimmerman, A. Olsho, T. I. Smith, P. Eaton, and S. W. Brahmia, Assessing physics quantitative literacy development in algebra-based physics, Phys. Rev. Phys. Educ. Res. 21 (2), 020108 (2025).

- C. Zimmerman, A. Totah-McCarty, S. White Brahmia, A. Olsho, M. De Cock, A. Boudreaux, T. Smith, and P. Eaton, Assessing physics quantitative literacy in algebra-based physics: lessons learned, presented at the Physics Education Research Conference 2022, Grand Rapids, MI, 2022.

We don't have any translations of this assessment yet.


| Typical Results |

|---|

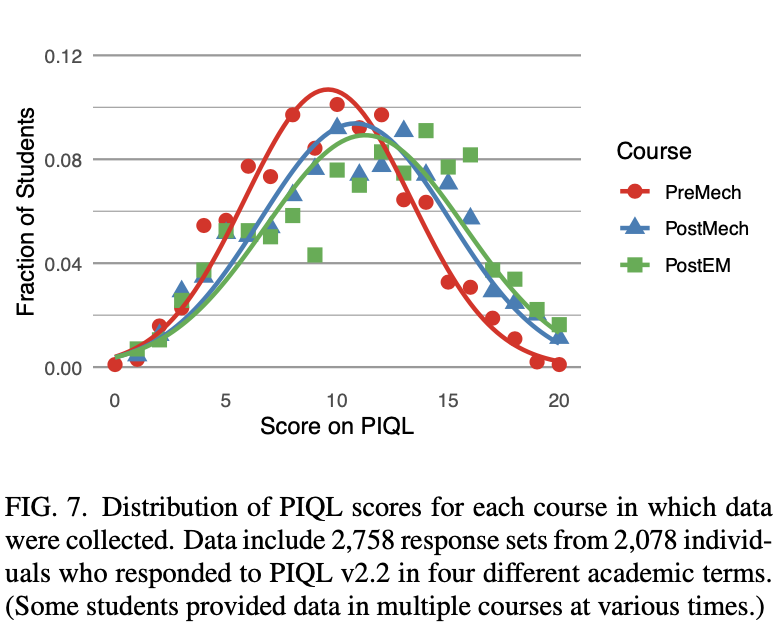

Figure 7 from Brahmia et al. 2021. Three snapshots of PIQL results in introductory course sequence: before mechanics “PreMech”, after mechanics but before electricity & magnetism “PostMech”, and after electricity & magnetism but before thermodynamics and waves “PostEM”. The data in Fig. 7 combine all four administrations of PIQL v2.2, including 2,758 data sets and 2,078 individuals. A one-way analysis of variance showed no significant difference between the four academic terms, F (3, 2754) = 1.6, p = 0.2. The PreMech mean and standard deviation are 9.6 ± 3.7 out of 20. Students’ PQL increases slightly with instruction, with mean scores 10.8 ± 4.3 for PostMech and 11.3 ± 4.5 for PostEM.

|
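As a quick consistency check on the reported F statistic, the degrees of freedom of a one-way analysis of variance follow from the number of groups $k$ and the number of data sets $N$. Taking $k = 4$ academic terms and $N = 2758$ data sets from the caption (the symbols $k$ and $N$ are introduced here for illustration):

$$
\mathrm{df}_{\text{between}} = k - 1 = 4 - 1 = 3, \qquad
\mathrm{df}_{\text{within}} = N - k = 2758 - 4 = 2754
$$

These match the values quoted in the caption, F(3, 2754).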

The most recent version of the PIQL, released in 2021, is version 2.2. The PIQL was designed for undergraduate calculus-based physics courses. The GERQN (General Equation-based Reasoning inventory of QuaNtity) is more appropriate for algebra-based courses at the college/university and high-school levels.

Variation

| Assessment | Focus | Level | Format |
|---|---|---|---|
| General Equation-based Reasoning inventory of QuaNtity | Scientific reasoning (proportional reasoning, reasoning with signed quantities, co-variational reasoning) | Intro college, High school | Pre/post, Multiple-choice, Multiple-response |