Developed by The Collaboration for Astronomy Education Research (CAER)

| Purpose | To assess students’ conceptual understanding of introductory astronomy topics. |
|---|---|
| Format | Pre/post, multiple-choice |
| Duration | Pre: 35 min; Post: 25 min |
| Focus | Astronomy content knowledge (apparent motion of the sun, scale of the solar system, phases of the moon, linear distance scales, seasons, global warming, nature of light, gravity, stars, cosmology) |
| Level | Intro college |

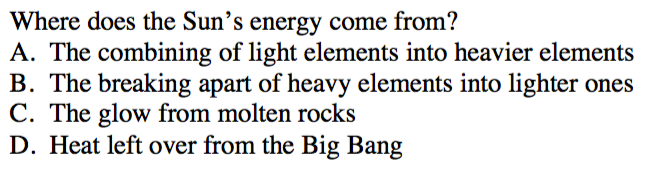

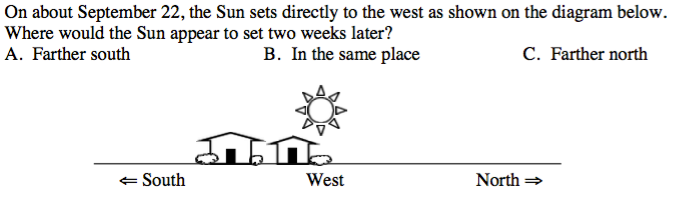

Sample questions from the ADT2:

ADT2 Implementation and Troubleshooting Guide

Everything you need to know about implementing the ADT2 in your class.


The ADT is included as part of more extensive strategies discussed in the following publications:

- Learner-Centered Astronomy Teaching: Strategies for ASTRO101 (Slater & Adams, Pearson Education/Prentice Hall: 2003, ISBN 0-13-046630-1)

- Peer Instruction for Astronomy (Green, Pearson Education/Prentice Hall: 2003, ISBN 0-13-026310-9)

- Great Ideas in Teaching Astronomy (Pompea, Brooks Cole: 2000, ISBN 0-534-37301-1)


This is the highest level of research validation, corresponding to all seven of the validation categories below.

Research Validation Summary

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

The multiple-choice questions on the most recent version of the Astronomy Diagnostic Test (ADT), version 2.0, are derived from an earlier version of the ADT, which was assembled from questions on several earlier astronomy tests. The Collaboration for Astronomy Education Research (CAER) rewrote the original ADT questions according to standard psychometric principles and added new questions, which then underwent expert review. The questions were given to students in 34 astronomy courses at several institutions, and statistical analyses of reliability, difficulty, and discrimination found reasonable values for each. Students were also given open-ended versions of the questions, and their answers were compared with the multiple-choice responses. Another set of students was interviewed about their responses to the ADT questions. The ADT has been given to over 5000 students at universities, four-year colleges, and two-year colleges in 31 states. A significant gender difference has been found in ADT scores, with women scoring an average of 28% and men 38% (standard errors both less than 1%). The ADT results are published in four peer-reviewed articles.
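To make the reliability, difficulty, and discrimination statistics mentioned above more concrete, here is a minimal Python sketch of classical test theory quantities commonly used for dichotomously scored (0/1) items: the difficulty index (fraction correct), a corrected point-biserial discrimination, KR-20 reliability, and the standard error of a mean score. The source does not say which formulas the ADT analyses used, so these specific choices and all function names are assumptions for illustration only.

```python
"""Classical test theory statistics for 0/1-scored multiple-choice items.

Illustrative sketch only: the procedures used in the published ADT analyses may differ.
Rows of `responses` are students; columns are questions (1 = correct, 0 = incorrect).
"""
from math import sqrt
from statistics import mean, pvariance, stdev


def item_difficulty(responses: list[list[int]]) -> list[float]:
    """Difficulty index p_j: fraction of students answering item j correctly."""
    n_items = len(responses[0])
    return [mean(row[j] for row in responses) for j in range(n_items)]


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; returns 0.0 if either variable has zero variance."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def item_discrimination(responses: list[list[int]], j: int) -> float:
    """Corrected point-biserial: correlate item j with the total on the other items."""
    item = [row[j] for row in responses]
    rest = [sum(row) - row[j] for row in responses]
    return pearson(item, rest)


def kr20(responses: list[list[int]]) -> float:
    """Kuder-Richardson 20 reliability: (k/(k-1)) * (1 - sum(p*q) / var(total))."""
    k = len(responses[0])
    item_var = sum(p * (1 - p) for p in item_difficulty(responses))
    total_var = pvariance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_var / total_var)


def mean_and_se(scores: list[float]) -> tuple[float, float]:
    """Mean score and its standard error (sample standard deviation / sqrt(n))."""
    return mean(scores), stdev(scores) / sqrt(len(scores))
```

For example, `kr20(responses)` on a small matrix of 0/1 scores returns a single reliability coefficient, with values closer to 1 indicating more internally consistent scores; a positive `item_discrimination` means students who answer that item correctly tend to score higher on the rest of the test.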

References

- E. Brogt, D. Sabers, E. Prather, G. Deming, B. Hufnagel, and T. Slater, Analysis of the Astronomy Diagnostic Test, Astron. Educ. Rev. 6 (1), 25 (2007).

- E. Brunsell and J. Marcks, Identifying A Baseline for Teachers’ Astronomy Content Knowledge, Astron. Educ. Rev. 3 (2), 38 (2009).

- G. Deming, Results from the Astronomy Diagnostic Test National Project, Astron. Educ. Rev. 1 (1), 52 (2002).

- B. Hufnagel, Development of the Astronomy Diagnostic Test, Astron. Educ. Rev. 1 (1), 47 (2002).

PhysPort provides translations of assessments as a service to our users, but does not endorse the accuracy or validity of translations. Assessments validated for one language and culture may not be valid for other languages and cultures.

| Language | Translator(s) |
|---|---|
| Spanish |  |
| Swedish | Frida Torrång |

If you know of a translation that we don't have yet, or if you would like to translate this assessment, please contact us!

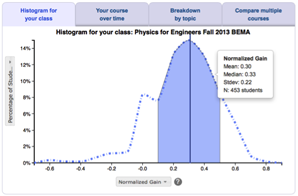

Score the ADT2 on the PhysPort Data Explorer

With one click, you get a comprehensive analysis of your results. You can:

- Examine your most recent results

- Chart your progress over time

- Break down any assessment by question or cluster

- Compare between courses
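A per-question or per-cluster breakdown amounts to averaging 0/1 scores over the relevant students and questions. The sketch below assumes responses are stored as rows of 0/1 scores, one row per student, and uses a hypothetical mapping of question indices to topic clusters. It is not the Data Explorer's implementation, and the ADT2's actual question-to-topic assignments are not given here.

```python
from statistics import mean

# Each row is one student; each column is one question, scored 1 (correct) or 0.
Responses = list[list[int]]


def percent_correct_by_question(responses: Responses) -> list[float]:
    """Percentage of students answering each question correctly."""
    n_questions = len(responses[0])
    return [100 * mean(row[j] for row in responses) for j in range(n_questions)]


def percent_correct_by_cluster(
    responses: Responses, clusters: dict[str, list[int]]
) -> dict[str, float]:
    """Average percentage correct over all student answers to the questions in each cluster."""
    return {
        name: 100 * mean(row[j] for row in responses for j in questions)
        for name, questions in clusters.items()
    }


# Hypothetical cluster mapping: the question indices are illustrative only.
example_clusters = {"seasons": [0], "phases of the moon": [1, 2]}
example_responses = [[1, 0, 1], [1, 1, 0]]
print(percent_correct_by_question(example_responses))
print(percent_correct_by_cluster(example_responses, example_clusters))
```

Running the same functions separately on pre-test and post-test data, or on data from different courses, gives the kind of side-by-side comparison described in the list above.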

| Typical Results |

|---|

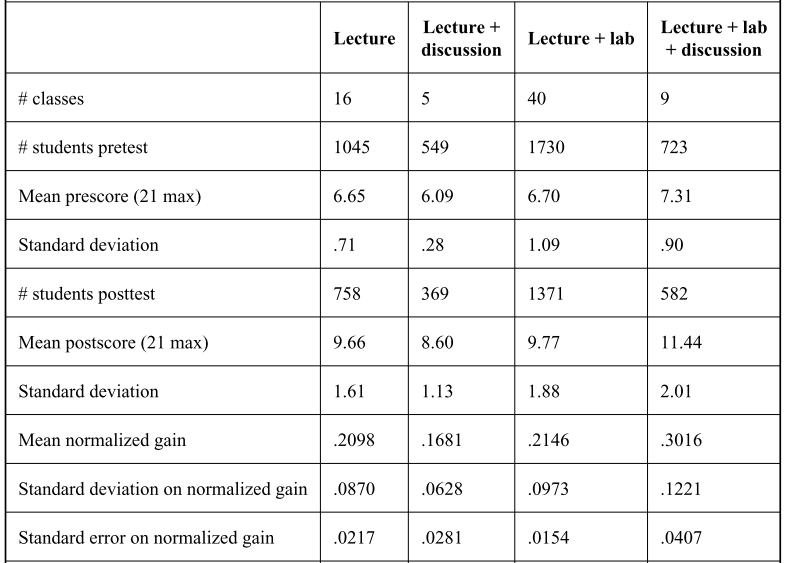

Typical results for different course formats from (Brogt et al., 2007):

|

The latest version of the ADT, version 2.0, was released in June 1999. Version 1.0, written by Michael Zeilik and released in 1998, consisted of 13 questions from his Misconceptions Measure (Zeilik et al. 1997), 10 questions from Phil Sadler’s 47-item Project STAR Astronomy Concept Inventory, and 10 new questions. Version 2.0 was rewritten by the Collaboration for Astronomy Education Research (CAER) using standard psychometric principles for multiple-choice assessments (e.g., Miyasaka and Ryan, 1997). These principles include testing only one concept per question, writing each question so that the correct answer can be determined before reading the answer choices, and avoiding scientific jargon.