Developed by Janelle Bailey, Bruce Johnson, Edward Prather, and Timothy Slater

| Purpose | To measure student learning about the properties and formation of stars. |
|---|---|
| Format | Pre/post, Multiple-choice |
| Duration | 20-25 min |
| Focus | Astronomy Content knowledge (stellar properties, nuclear fusion, star formation) |
| Level | Intro college |

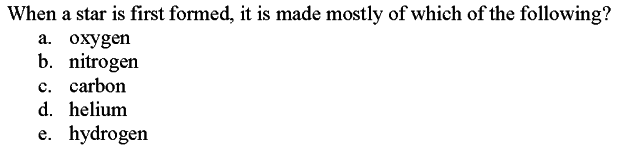

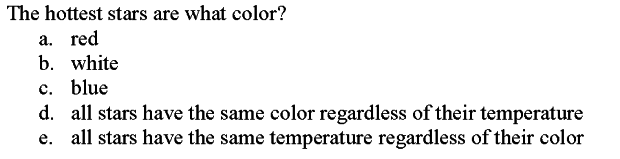

Sample questions from the SPCI:

SPCI Implementation and Troubleshooting Guide

Everything you need to know about implementing the SPCI in your class.


This is the highest level of research validation, corresponding to all seven of the validation categories below.

Research Validation Summary

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

The multiple-choice questions on the SPCI were developed based on exam and textbook questions and the authors' experience with teaching the content. The initial set of multiple-choice answers was developed based on research on students' ideas about stars, collected through interviews and open-ended responses. These questions were given to over 900 students in three formats (multiple-choice, multiple-choice plus explanation, and open-ended), and the responses were compared. Additionally, students were interviewed about their responses to ensure that the questions were interpreted as intended. The questions were revised to create version 2 of the SPCI, which was administered to over 500 students, after which it was revised again to create version 3. The SPCI then underwent expert review. Version 3 was given to over 400 students, and appropriate analyses of reliability, difficulty, and discrimination were performed; reasonable values were found and/or questions were revised. Overall, the SPCI has been given to over 2000 students at two institutions, and the results are published in three peer-reviewed papers.
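To make the reliability, difficulty, and discrimination analyses concrete, here is a minimal Python sketch of one common way to compute such statistics for a multiple-choice test. The summary above does not say which statistics the SPCI authors used, so the choices here are assumptions: difficulty as the fraction answering correctly, discrimination as the upper-group minus lower-group difference (27% groups), and reliability as KR-20 with population variance. The function names and the tiny `responses` matrix are hypothetical and are not SPCI data.

```python
from statistics import pvariance

# Hypothetical scored responses: one row per student, one column per item,
# 1 = correct, 0 = incorrect. This is illustrative data, not SPCI results.
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]

def item_difficulty(rows, item):
    """Fraction of students who answered the given item correctly."""
    return sum(row[item] for row in rows) / len(rows)

def item_discrimination(rows, item, fraction=0.27):
    """Difficulty in the top-scoring group minus the bottom-scoring group."""
    ranked = sorted(rows, key=sum)  # ascending by total score
    k = max(1, round(len(ranked) * fraction))
    lower, upper = ranked[:k], ranked[-k:]
    return item_difficulty(upper, item) - item_difficulty(lower, item)

def kr20(rows):
    """Kuder-Richardson 20 reliability for dichotomously scored items."""
    n_items = len(rows[0])
    total_variance = pvariance([sum(row) for row in rows])
    pq_sum = 0.0
    for i in range(n_items):
        p = item_difficulty(rows, i)
        pq_sum += p * (1 - p)
    return (n_items / (n_items - 1)) * (1 - pq_sum / total_variance)

for i in range(len(responses[0])):
    print(i, item_difficulty(responses, i), item_discrimination(responses, i))
print("KR-20:", round(kr20(responses), 3))
```

With real data, each row would hold one student's scored answers to every question on the version being analyzed. Items with very high or very low difficulty, or with low discrimination, are the usual candidates for revision.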

References

- J. Bailey, Development of a concept inventory to assess students' understanding and reasoning difficulties about the properties and formation of stars, Astron. Educ. Rev. 6 (2), 133 (2007).

- J. Bailey, B. Johnson, E. Prather, and T. Slater, Development and Validation of the Star Properties Concept Inventory, Int. J. Sci. Educ. 34 (14), 2257 (2011).

PhysPort provides translations of assessments as a service to our users, but does not endorse the accuracy or validity of translations. Assessments validated for one language and culture may not be valid for other languages and cultures.

| Language | Translator(s) |
|---|---|
| Japanese | Michi Ishimoto |
| Spanish | Agustín Vallejo |

If you know of a translation that we don't have yet, or if you would like to translate this assessment, please contact us!

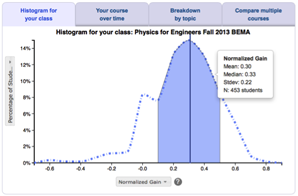

Score the SPCI on the PhysPort Data Explorer

With one click, you get a comprehensive analysis of your results. You can:

- Examine your most recent results

- Chart your progress over time

- Break down any assessment by question or cluster

- Compare between courses

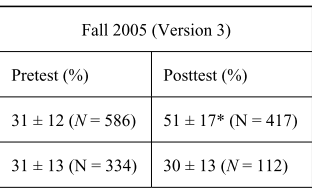

Typical Results

Typical results from Bailey 2007: During fall 2005, Version 3 was administered as a pretest to 1,101 participants (644 of whom completed the posttest) in both ASTRO 101 and ES 101 classes (where ES 101 courses are other introductory science courses, such as earth science or atmospheric science). After removing responses that were incomplete or careless (e.g., choosing all Bs), means were calculated for both groups for the pretest and posttest administrations (Table 1). Using the subset of ASTRO 101 responses that could be matched between pretest and posttest, an effect size (Cohen’s d; Thalheimer & Cook 2002) of 1.35 was calculated.
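For readers who want to compute a comparable effect size for their own class, the following is a minimal Python sketch of Cohen's d using the pooled sample standard deviation. This is the common textbook form and is an assumption here; it may differ in detail from the variant applied in Bailey 2007. The function name and the score lists are hypothetical and are not SPCI results.

```python
from math import sqrt
from statistics import mean, stdev

def cohens_d(pre, post):
    """Cohen's d: difference in means divided by the pooled sample SD."""
    n_pre, n_post = len(pre), len(post)
    s_pre, s_post = stdev(pre), stdev(post)
    pooled_sd = sqrt(
        ((n_pre - 1) * s_pre**2 + (n_post - 1) * s_post**2)
        / (n_pre + n_post - 2)
    )
    return (mean(post) - mean(pre)) / pooled_sd

# Hypothetical matched pretest and posttest scores (illustrative only).
pre_scores = [6, 7, 8, 9, 10]
post_scores = [10, 11, 12, 13, 14]

d = cohens_d(pre_scores, post_scores)
print(round(d, 2))  # prints 2.53
```

By convention, values of d near 0.8 or above are often described as large, so the reported 1.35 indicates a substantial pre-to-post gain for the matched ASTRO 101 group.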


The latest version of the SPCI, released in 2013, is version 4. Versions 1-3 are described in Bailey et al. 2011.