Developed by Lin Ding

| Purpose | To measure to what extent specific implementations of the Matter & Interactions curriculum succeed in teaching students central energy concepts. |
|---|---|
| Format | Pre/post, Multiple-choice |
| Duration | 30-60 min |
| Focus | Mechanics Content knowledge (energy principle, forms of energy, work and heat, absorption/emission spectrum, specifying appropriate systems) |
| Level | Intro college |

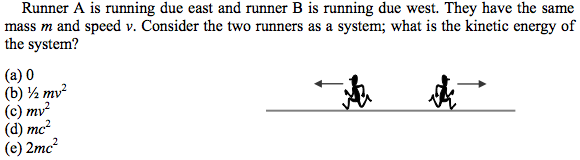

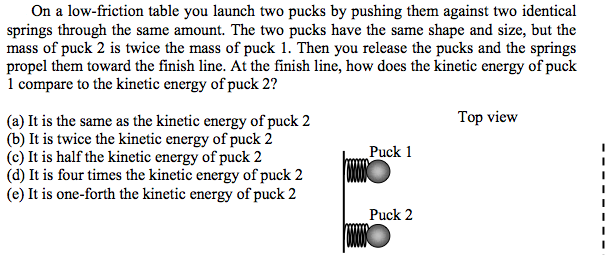

Sample questions from the ECA:

ECA Implementation and Troubleshooting Guide

Everything you need to know about implementing the ECA in your class.

This is the highest level of research validation, corresponding to all seven of the validation categories below.

Research Validation Summary

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

The multiple-choice questions on the ECA were developed from test objectives that describe the goals of the M&I course; the objectives themselves were reviewed by experts. Many of the multiple-choice options come from students' ideas. The test questions were reviewed by experts, and student interviews were conducted to ensure that students were interpreting the questions correctly. Appropriate statistical analyses of reliability, difficulty, and discrimination were conducted, and reasonable values were found for each. Questions were classified according to the revised Bloom's taxonomy, and most were found to require application. A factor analysis found two factors: the first groups questions about the energy principle and basic forms of energy; the second includes questions about the graphical representation of the gravitational and kinetic energy of a closed system. The ECA has been used with over 300 students at several institutions. There is one peer-reviewed publication presenting ECA data.
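As a rough illustration of the item statistics mentioned above, the sketch below computes three common classical-test-theory measures from a matrix of right/wrong scores: item difficulty (fraction correct), item discrimination (point-biserial correlation with the rest-of-test score), and KR-20 reliability. The source does not say which specific indices were used for the ECA, so these choices are assumptions, and the function names and the tiny response matrix are hypothetical.

```python
from statistics import mean, pvariance

# Hypothetical scored responses: one row per student, one column per item,
# 1 = correct, 0 = incorrect. This is NOT real ECA data.
RESPONSES = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
    [1, 0, 1, 0],
]


def item_difficulty(responses, item):
    """Fraction of students who answered the item correctly."""
    return mean(row[item] for row in responses)


def item_discrimination(responses, item):
    """Point-biserial correlation between an item and the rest-of-test score."""
    item_scores = [row[item] for row in responses]
    rest_scores = [sum(row) - row[item] for row in responses]
    sd_item = pvariance(item_scores) ** 0.5
    sd_rest = pvariance(rest_scores) ** 0.5
    if sd_item == 0 or sd_rest == 0:
        return float("nan")  # correlation is undefined without variation
    m_item, m_rest = mean(item_scores), mean(rest_scores)
    cov = mean((x - m_item) * (y - m_rest)
               for x, y in zip(item_scores, rest_scores))
    return cov / (sd_item * sd_rest)


def kr20(responses):
    """Kuder-Richardson 20 reliability for dichotomously scored items."""
    k = len(responses[0])
    total_variance = pvariance([sum(row) for row in responses])
    pq_sum = sum(p * (1 - p)
                 for p in (item_difficulty(responses, i) for i in range(k)))
    return (k / (k - 1)) * (1 - pq_sum / total_variance)


for i in range(len(RESPONSES[0])):
    print(f"item {i}: difficulty={item_difficulty(RESPONSES, i):.2f}, "
          f"discrimination={item_discrimination(RESPONSES, i):.2f}")
print(f"KR-20 reliability: {kr20(RESPONSES):.2f}")
```

With real data, each row would be one student's scored answers to the full test; the PhysPort scoring tools may compute these or related quantities differently.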

References

- L. Ding, Designing an Energy Assessment to Evaluate Student Understanding of Energy Topics, Ph.D., North Carolina State University, 2007.

- L. Ding, R. Chabay, and B. Sherwood, How do students in an innovative principle-based mechanics course understand energy concepts?, J. Res. Sci. Teaching 50 (6), 722 (2013).

- K. Neumann, T. Viering, W. Boone, and H. Fischer, Towards a learning progression of energy, J. Res. Sci. Teaching 50 (2), 162 (2012).

PhysPort provides translations of assessments as a service to our users, but does not endorse the accuracy or validity of translations. Assessments validated for one language and culture may not be valid for other languages and cultures.

| Language | Translator(s) |
|---|---|
| Croatian | Josipa Stipanov |

If you know of a translation that we don't have yet, or if you would like to translate this assessment, please contact us!

Score the ECA on the PhysPort Data Explorer

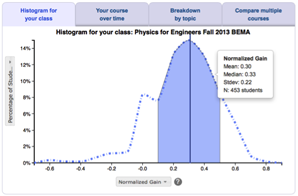

With one click, you get a comprehensive analysis of your results. You can:

- Examine your most recent results

- Chart your progress over time

- Break down any assessment by question or cluster

- Compare between courses

| Typical Results |

|---|

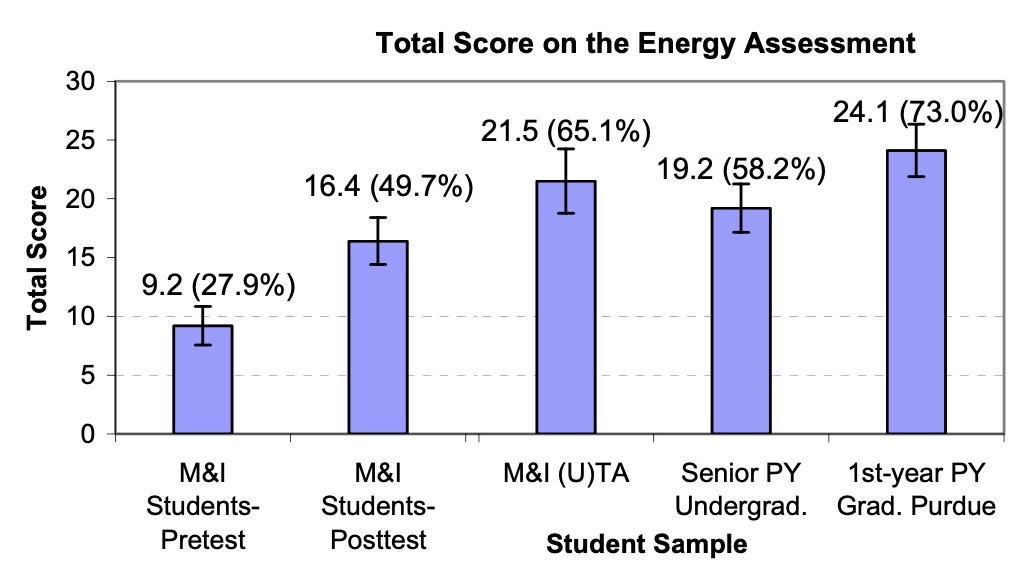

Typical scores on the ECA from Ding 2007. The ECA was developed to test the understanding of students before and after instruction in a Matter and Interactions (M&I) physics course. M&I is a course for first year physics that teaches a small number of fundamental principles and how to apply them in many different settings. Students in these courses typically perform only marginally better than random guessing on the pretest with an average score of 27.9% ± 8.3% suggesting that many of these ideas are unfamiliar to incoming students. However, students' post-test scores showed a marked improvement, with an average of 49.7% ± 12.1%. Undergraduate physics TAs, senior physics students, and physics graduate students perform better, with average scores of 61.1%, 58.2%, and 73.0%, respectively. The normalized gain for the M&I students in a first semester introductory course was 30.7% +/- 17.0% (std. dev.).

|
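The normalized gain reported above is conventionally defined as the improvement actually achieved divided by the improvement that was possible: (post − pre) / (100 − pre), with scores in percent. The short Python sketch below applies that definition to the class averages quoted from Ding 2007; the function and variable names are illustrative. Computed from the two class means, the gain comes out near 30.2%, slightly below the reported 30.7%. One plausible explanation, which is an assumption here and not something the source states, is that the reported value averages each student's individual gain, which would also explain why it carries a standard deviation.

```python
def normalized_gain(pre_percent, post_percent):
    """Normalized gain: fraction of the possible improvement that was achieved."""
    if not 0 <= pre_percent < 100:
        raise ValueError("pre_percent must be in the range [0, 100)")
    return (post_percent - pre_percent) / (100.0 - pre_percent)


# Class averages quoted in the text (Ding 2007), in percent.
PRE_MEAN = 27.9
POST_MEAN = 49.7

# "Gain of averages": one gain computed from the two class means.
gain_of_averages = normalized_gain(PRE_MEAN, POST_MEAN)
print(f"Gain of averages: {gain_of_averages:.1%}")  # prints "Gain of averages: 30.2%"


def average_of_gains(score_pairs):
    """Mean of per-student gains, given (pre, post) pairs in percent."""
    gains = [normalized_gain(pre, post) for pre, post in score_pairs]
    return sum(gains) / len(gains)
```

The two approaches generally give slightly different numbers for the same class, so it is worth stating which one is used when comparing results across courses.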

The latest version of the ECA is version 2, released in 2013. Version 1 was released in 2007, along with an earlier pilot version, which, according to Ding, "... is not meant to be a readily usable assessment tool."