Developed by Bob Beichner, Genaro Zavala, Santa Tejeda, and Pablo Barniol

| Purpose | To assess students’ ability to interpret kinematics graphs. |
|---|---|
| Format | Pre/post, Multiple-choice |
| Duration | 45 min |
| Focus | Mechanics content knowledge (kinematics, graphing) |
| Level | Intro college, High school |

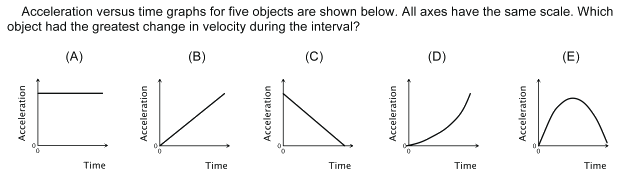

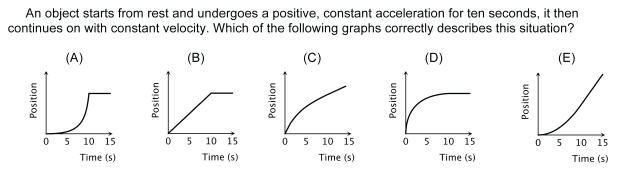

Sample questions from the TUG-K:

TUG-K Implementation and Troubleshooting Guide

Everything you need to know about implementing the TUG-K in your class.

The implementation guide is available to registered PhysPort users.


Research Validation Summary

This is the highest level of research validation, corresponding to all seven of the validation categories below.

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

The multiple-choice questions on the TUG-K were developed from seven objectives drawn from banks of test questions, introductory textbooks, and informal interviews with instructors. The answer choices were written to reflect previously documented student difficulties with kinematics graphs. The questions were given to over 350 high school and college students and then revised. Appropriate statistical analyses of item discrimination and test reliability were performed on version 2.6: most questions had adequate discrimination, and the overall reliability of the TUG-K is good. Students in calculus-based courses scored significantly higher on the TUG-K than students in algebra-based courses. Appropriate statistical analyses of version 4.0 (the latest version) were also performed and found reasonable values for item difficulty, discrimination, and reliability. The TUG-K has been given to over 1000 students in high school and introductory college courses at many institutions, and three peer-reviewed publications report TUG-K results.
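The paragraph above refers to item difficulty, discrimination, and reliability without defining them. As a rough illustration, the sketch below computes the common classical-test-theory versions of these statistics for dichotomously scored (0/1) multiple-choice items: the difficulty index P (fraction correct), an upper-minus-lower-group discrimination index D, and the Kuder-Richardson 20 (KR-20) reliability coefficient. This is a minimal sketch under stated assumptions, not the procedure used in the TUG-K studies: the source does not say which exact indices were used, the 27% upper/lower split is one conventional choice assumed here, and the function names and toy scores are hypothetical.

```python
from statistics import pvariance

# Each inner list holds one student's item scores: 1 = correct, 0 = incorrect.
ScoreMatrix = list[list[int]]


def item_difficulty(responses: ScoreMatrix) -> list[float]:
    """Difficulty index P for each item: the fraction of students who answered it correctly."""
    n_students = len(responses)
    n_items = len(responses[0])
    return [sum(student[i] for student in responses) / n_students for i in range(n_items)]


def discrimination_index(responses: ScoreMatrix, fraction: float = 0.27) -> list[float]:
    """Discrimination index D = P_upper - P_lower for each item.

    Students are ranked by total score; the upper and lower groups are the top and
    bottom `fraction` of students (27% is a common convention, assumed here).
    """
    ranked = sorted(responses, key=sum, reverse=True)
    k = max(1, round(fraction * len(ranked)))
    p_upper = item_difficulty(ranked[:k])
    p_lower = item_difficulty(ranked[-k:])
    return [u - l for u, l in zip(p_upper, p_lower)]


def kr20(responses: ScoreMatrix) -> float:
    """Kuder-Richardson 20 reliability coefficient for dichotomously scored items."""
    n_items = len(responses[0])
    if n_items < 2:
        raise ValueError("KR-20 needs at least two items")
    p = item_difficulty(responses)
    sum_pq = sum(pi * (1 - pi) for pi in p)
    # Population variance of total scores, consistent with the population p*q item variances.
    total_var = pvariance([sum(student) for student in responses])
    if total_var == 0:
        raise ValueError("KR-20 is undefined when every student has the same total score")
    return (n_items / (n_items - 1)) * (1 - sum_pq / total_var)


# Hypothetical toy data: five students, four items (not TUG-K results).
scores: ScoreMatrix = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
print(item_difficulty(scores))
print(discrimination_index(scores))
print(kr20(scores))
```

With these toy scores the strongest student answers every item and the weakest answers none, so D = 1 for all four items; real assessment data are much noisier. The upper/lower-group index is used here because it is simple to compute by hand; a point-biserial correlation between each item and the total score is a common alternative.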

References

- R. Beichner, The impact of video motion analysis on kinematics graph interpretation skills, Am. J. Phys. 64 (10), 1272 (1996).

- R. Beichner, Testing student interpretation of kinematics graphs, Am. J. Phys. 62 (8), 750 (1994).

- B. Bektasli, The relationships between spatial ability, logical thinking, mathematics performance and kinematics graph interpretation skills of 12th grade physics students, Ph.D., Ohio State University, 2006.

- N. Chanpichai and P. Wattanakasiwich, Teaching Physics with Basketball, AIP Conf. Proc. 1263, 212 (2010).

- R. Culbertson, J. Archambault, T. Burch, M. Crofton, and A. McClure, The Effects of Developing Kinematics Concepts Graphically Prior to Introducing Algebraic Problem Solving Techniques, Master's, Arizona State University, 2008.

- A. Maries and C. Singh, Exploring one aspect of pedagogical content knowledge of teaching assistants using the test of understanding graphs in kinematics, Phys. Rev. ST Phys. Educ. Res. 9 (2), 020120 (2013).

- A. Maries and C. Singh, Performance of graduate students at identifying introductory students' difficulties related to kinematics graphs, presented at the Physics Education Research Conference 2014, Minneapolis, MN, 2014.

- N. Perez-Goytia, A. Dominguez, and G. Zavala, Understanding and Interpreting Calculus Graphs: Refining an Instrument, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- S. Tejeda-Torres and H. Alarcon, A tutorial-type activity to overcome learning difficulties in understanding graphics in kinematics, Lat. Am. J. Phys. Educ. 6 (Suppl. I), 285 (2012).

- W. Turner, G. Ellis, and R. Beichner, A Comparison of Student Misconceptions in Rotational and Rectilinear Motion, presented at the ASEE Annual Conference & Exposition, Indianapolis, 2014.

- C. Watson and V. Brathwaite, An Open and Interactive Multimedia e-Learning Module for Graphing Kinematics, presented at the 8th International Conference on e-Learning, Cape Town, South Africa, 2013.

- G. Zavala, S. Tejeda-Torres, P. Barniol, and R. Beichner, Modifying the test of understanding graphs in kinematics, Phys. Rev. Phys. Educ. Res. 13 (2), 020111 (2017).

PhysPort provides translations of assessments as a service to our users, but does not endorse the accuracy or validity of translations. Assessments validated for one language and culture may not be valid for other languages and cultures.

If you know of a translation that we don't have yet, or if you would like to translate this assessment, please contact us!

Registered PhysPort users can download the answer key and an Excel scoring and analysis tool for this assessment.

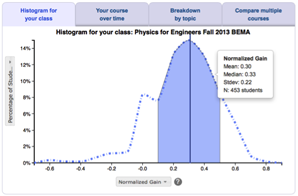

Score the TUG-K on the PhysPort Data Explorer

With one click, you get a comprehensive analysis of your results. You can:

- Examine your most recent results

- Chart your progress over time

- Break down any assessment by question or cluster

- Compare between courses

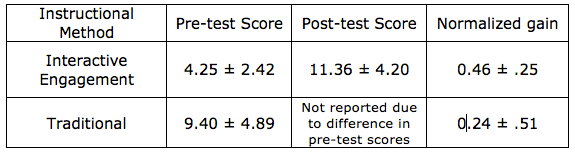

Typical Results

Typical results from Culbertson et al. (see Beichner, 1996, for more typical results).

The latest version of the TUG-K, released in 2017, is version 4.0. The original validation research was done on version 2.6, released in 1996. Based on the results of this research, the original author, Beichner, modified and expanded the assessment and in 2010 released versions 3.0, 3.1, and 3.2, which are nearly identical. Zavala, Santa Tejeda, Barniol, and Beichner then released version 4.0, with significant changes including "(i) the addition and removal of items to achieve parallelism in the objectives (dimensions) of the assessment, thus allowing comparisons of students’ performance that were not possible with the original version, and (ii) changes to the distractors of some of the original items that represent the most frequent alternative conceptions."

Version 2.6 has 21 questions, whereas versions 3.0, 3.1, 3.2, and 4.0 have 26 questions. Question 10 was changed from choosing the graph with the least area under the curve in version 2.6 to asking how to find the object's change in acceleration in versions 3.0, 3.1, and 3.2. Question 25 is worded slightly differently in versions 3.0, 3.1, and 3.2 but is otherwise the same.

Most translations are available only for the original version 2.6 (Spanish is available for 2.6 and 3.0). In general, authors update versions to clarify questions, to change distractors to better reflect student thinking, and sometimes to slightly modify the content to better reflect the most prevalent concepts, as determined by faculty in the field. For the TUG-K, the author, Robert Beichner, says "feel free to administer any version you like."

The TUG-K2 is a variant of the TUG-K designed specifically for use in high school classrooms. The major difference is that the TUG-K2 de-emphasizes problems involving changing accelerations, which are rarely discussed in high school. It also includes a few completely new questions better suited to high school students, such as one dealing with instantaneous velocity. The remaining differences are minor, such as reporting numbers without scientific notation or simplifying the wording of questions (e.g., changing "What was the acceleration at t = 90 s?" to "What was the acceleration at the 90 s mark?").

Variation

| Assessment | Focus | Level | Format |
|---|---|---|---|
| Test of Understanding Graphs in Kinematics for High School | Mechanics content knowledge (kinematics, graphing) | High school | Pre/post, Multiple-choice |