Developed by David Hestenes, Malcolm Wells, Gregg Swackhamer, Ibrahim Halloun, Richard Hake, and Eugene Mosca

| Purpose | To assess students’ understanding of the most basic concepts in Newtonian physics using everyday language and common-sense distractors. |
|---|---|
| Format | Pre/post, multiple-choice |
| Duration | 30 min |
| Focus | Mechanics content knowledge (forces, kinematics) |
| Level | Intro college, high school |

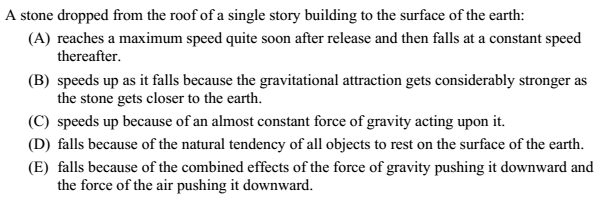

Sample question from the FCI:

FCI Implementation and Troubleshooting Guide

Everything you need to know about implementing the FCI in your class.


Research Validation Summary

This is the highest level of research validation, corresponding to all seven of the validation categories below.

Based on Research Into:

- Student thinking

Studied Using:

- Student interviews

- Expert review

- Appropriate statistical analysis

Research Conducted:

- At multiple institutions

- By multiple research groups

- Peer-reviewed publication

About half of the questions on the FCI come from an earlier assessment called the Mechanics Diagnostic Test (MDT). Questions on the MDT were developed using students' ideas from open-ended responses. These questions were then reviewed by experts, refined through student interviews, and given to over 1000 students. Statistical analysis of the MDT found both the pre-test and the post-test to be highly reliable. For those FCI questions not taken directly from the MDT, open-ended responses and responses given by students in interviews were compared to ensure that the questions were being interpreted correctly. Since its release, over 50 studies using the FCI have been published, at both the high school and college levels, covering over 70 institutions and including data on over 35,000 students. Most notable is the study by Hake (1998), which compared FCI scores by instructional method for over 6500 students.

References

- R. Beichner, The Student-Centered Activities for Large Enrollment Undergraduate Programs (SCALE-UP) Project, in Research-Based Reform of University Physics, edited by E. Redish and P. Cooney, (American Association of Physics Teachers, College Park, 2007), Vol. 1.

- J. Blue and P. Heller, Using Matched Samples to Look for Sex Differences, presented at the Physics Education Research Conference 2003, Madison, WI, 2003.

- E. Brewe, V. Sawtelle, L. Kramer, G. O'Brien, I. Rodriguez, and P. Pamelá, Toward equity through participation in Modeling Instruction in introductory university physics, Phys. Rev. ST Phys. Educ. Res. 6 (1), 010106 (2010).

- M. Caballero, E. Greco, E. Murray, K. Bujak, M. Marr, R. Catrambone, M. Kohlmyer, and M. Schatz, Comparing large lecture mechanics curricula using the Force Concept Inventory: A five thousand student study, Am. J. Phys. 80 (7), 638 (2012).

- V. Coletta, J. Phillips, and J. Steinert, Interpreting force concept inventory scores: Normalized gain and SAT scores, Phys. Rev. ST Phys. Educ. Res. 3 (1), 010106 (2007).

- V. Coletta, J. Phillips, and J. Steinert, FCI normalized gain, scientific reasoning ability, thinking in physics, and gender effects, presented at the Physics Education Research Conference 2011, Omaha, Nebraska, 2011.

- C. Crouch and E. Mazur, Peer instruction: Ten years of experience and results, Am. J. Phys. 69 (9), 970 (2001).

- K. Cummings, J. Marx, R. Thornton, and D. Kuhl, Evaluating innovation in studio physics, Am. J. Phys. 67 (S1), S38 (1999).

- M. Dancy and R. Beichner, Impact of animation on assessment of conceptual understanding in physics, Phys. Rev. ST Phys. Educ. Res. 2 (1), (2006).

- D. Desbien, Modeling Discourse Management Compared to Other Classroom Management Styles in University Physics, Arizona State University, 2002.

- S. DeVore, J. Stewart, and G. Stewart, Examining the effects of testwiseness in conceptual physics evaluations, Phys. Rev. Phys. Educ. Res. 12 (2), 020138 (2016).

- R. Dietz, R. Pearson, M. Semak, and C. Willis, Gender bias in the force concept inventory?, presented at the Physics Education Research Conference 2011, Omaha, Nebraska, 2011.

- L. Ding, N. Reay, A. Lee, and L. Bao, Effects of testing conditions on conceptual survey results, Phys. Rev. ST Phys. Educ. Res. 4 (1), 010112 (2008).

- J. Docktor and K. Heller, Gender Differences in Both Force Concept Inventory and Introductory Physics Performance, presented at the Physics Education Research Conference 2008, Edmonton, Canada, 2008.

- P. Eaton, Evidence of measurement invariance across gender for the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 17 (1), 010130 (2021).

- P. Eaton, B. Frank, and S. Willoughby, Detecting the influence of item chaining on student responses to the Force Concept Inventory and the Force and Motion Conceptual Evaluation, Phys. Rev. Phys. Educ. Res. 16 (2), 020122 (2020).

- P. Eaton, K. Johnson, and S. Willoughby, Generating a growth-oriented partial credit grading model for the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 15 (2), 020151 (2019).

- P. Eaton and S. Willoughby, Confirmatory factor analysis applied to the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 14 (1), 010124 (2018).

- P. Eaton and S. Willoughby, Identifying a preinstruction to postinstruction factor model for the Force Concept Inventory within a multitrait item response theory framework, Phys. Rev. Phys. Educ. Res. 16 (1), 010106 (2020).

- K. Gray, N. Rebello, and D. Zollman, The Effect of Question Order on Responses to Multiple-choice Questions, presented at the Physics Education Research Conference 2002, Boise, Idaho, 2002.

- R. Hake, Interactive-Engagement Versus Traditional Methods: A Six-Thousand-Student Survey of Mechanics Test Data for Introductory Physics Courses, Am. J. Phys. 66 (1), 64 (1998).

- I. Halloun and D. Hestenes, Common sense concepts about motion, Am. J. Phys. 53 (11), 1056 (1985).

- I. Halloun and D. Hestenes, The initial knowledge state of college physics students, Am. J. Phys. 53 (11), 1043 (1985).

- I. Halloun and D. Hestenes, Interpreting the force concept inventory: A response to March 1995 critique by Huffman and Heller, Phys. Teach. 33 (8), 502 (1995).

- C. Henderson, Common Concerns About the Force Concept Inventory, Phys. Teach. 40 (9), 542 (2002).

- R. Henderson and J. Stewart, Racial and ethnic bias in the Force Concept Inventory, presented at the Physics Education Research Conference 2017, Cincinnati, OH, 2017.

- R. Henderson, J. Stewart, and A. Traxler, Partitioning the gender gap in physics conceptual inventories: Force Concept Inventory, Force and Motion Conceptual Evaluation, and Conceptual Survey of Electricity and Magnetism, Phys. Rev. Phys. Educ. Res. 15 (1), 010131 (2019).

- D. Hestenes and M. Wells, A mechanics baseline test, Phys. Teach. 30 (3), 159 (1992).

- D. Hestenes, M. Wells, and G. Swackhamer, Force concept inventory, Phys. Teach. 30 (3), 141 (1992).

- D. Hewagallage, J. Stewart, and R. Henderson, Differences in the predictive power of pretest scores of students underrepresented in physics, presented at the Physics Education Research Conference 2019, Provo, UT, 2019.

- M. Hull, J. Yasuda, M. Taniguchi, and N. Mae, Towards quantification of the FCI’s validity: the effect of false positives, presented at the Physics Education Research Conference 2017, Cincinnati, OH, 2017.

- L. Kost, S. Pollock, and N. Finkelstein, Characterizing the gender gap in introductory physics, Phys. Rev. ST Phys. Educ. Res. 5 (1), 010101 (2009).

- J. Mahadeo, S. Manthey, and E. Brewe, Regression analysis exploring teacher impact on student FCI post scores, presented at the Physics Education Research Conference 2012, Philadelphia, PA, 2012.

- K. Malone, Correlations among knowledge structures, force concept inventory, and problem-solving behaviors, Phys. Rev. ST Phys. Educ. Res. 4 (2), 020107 (2008).

- A. Maries, N. Karim, and C. Singh, Is agreeing with a gender stereotype correlated with the performance of female students in introductory physics?, Phys. Rev. Phys. Educ. Res. 14 (2), 020119 (2018).

- E. Mazur, Peer Instruction: A User's Manual (Prentice Hall, Upper Saddle River, 1997).

- T. McCaskey, M. Dancy, and A. Elby, Effects on assessment caused by splits between belief and understanding, presented at the Physics Education Research Conference 2003, Madison, WI, 2003.

- L. McCullough, Gender Differences in Student Responses to Physics Conceptual Questions Based on Question Context, presented at the ASQ Advancing the STEM Agenda in Education, the Workplace and Society, University of Wisconsin-Stout, 2011.

- L. McCullough, Gender, Math, and the FCI, presented at the Physics Education Research Conference 2002, Boise, Idaho, 2002.

- L. McCullough and D. Meltzer, Differences in Male/Female Response Patterns on Alternative-format Versions of the Force Concept Inventory, presented at the Physics Education Research Conference 2001, Rochester, New York, 2001.

- M. Mears, Gender differences in the Force Concept Inventory for different educational levels in the United Kingdom, Phys. Rev. Phys. Educ. Res. 15 (2), 020135 (2019).

- G. Morris, N. Harshman, L. Branum-Martin, E. Mazur, T. Mzoughi, and S. Baker, An item-response curves analysis of the Force Concept Inventory, Am. J. Phys. 80 (9), 825 (2012).

- P. Nieminen, A. Savinainen, and J. Viiri, Force Concept Inventory-based multiple-choice test for investigating students' representational consistency, Phys. Rev. ST Phys. Educ. Res. 6 (2), 020109 (2010).

- T. O'Brien Pride, S. Vokos, and L. McDermott, The challenge of matching learning assessments to teaching goals: An example from the work-energy and impulse-momentum theorems, Am. J. Phys. 66 (2), 147 (1998).

- S. Osborn Popp and J. Jackson, Can Assessment of Student Conceptions of Force be Enhanced Through Linguistic Simplification?, presented at the American Educational Research Association 2009, San Diego, CA, 2009.

- S. Osborn Popp, D. Meltzer, and C. Megowan-Romanowicz, Is the Force Concept Inventory Biased? Investigating Differential Item Functioning on a Test of Conceptual Learning in Physics, presented at the Annual Meeting of the American Educational Research Association, New Orleans, LA, 2011.

- N. Rebello and D. Zollman, The effect of distracters on student performance on the Force Concept Inventory, Am. J. Phys. 72 (1), 116 (2004).

- A. Rudolph, B. Lamine, M. Joyce, H. Vignolles, and D. Consiglio, Introduction of interactive learning into French university physics classrooms, Phys. Rev. ST Phys. Educ. Res. 10 (1), 010103 (2014).

- J. Santana-Fajardo, Gain in learning the force concept and change in attitudes toward Physics in students of the Tonala High School, CienciaUAT 13 (1), 65 (2018).

- Y. Shoji, S. Munejiri, and E. Kaga, Validity of Force Concept Inventory evaluated by students’ explanations and confirmation using modified item response curve, Phys. Rev. Phys. Educ. Res. 17 (2), 020120 (2021).

- J. Stang, E. Altiere, J. Ives, and P. Dubois, Exploring the contributions of self-efficacy and test anxiety to gender differences in assessments, presented at the Physics Education Research Conference 2020, Virtual Conference, 2020.

- R. Steinberg and M. Sabella, Performance on multiple-choice diagnostics and complementary exam problems, Phys. Teach. 35 (3), 150 (1997).

- J. Stewart, H. Griffin, and G. Stewart, Context sensitivity in the force concept inventory, Phys. Rev. ST Phys. Educ. Res. 3 (1), 010102 (2007).

- J. Stewart, C. Zabriskie, S. DeVore, and G. Stewart, Multidimensional item response theory and the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 14 (1), 010137 (2018).

- S. Stoen, M. McDaniel, R. Frey, M. Hynes, and M. Cahill, Force Concept Inventory: More than just conceptual understanding, Phys. Rev. Phys. Educ. Res. 16 (1), 010105 (2020).

- R. Thornton, D. Kuhl, K. Cummings, and J. Marx, Comparing the force and motion conceptual evaluation and the force concept inventory, Phys. Rev. ST Phys. Educ. Res. 5 (1), 010105 (2009).

- A. Traxler, R. Henderson, J. Stewart, G. Stewart, A. Papak, and R. Lindell, Gender fairness within the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 14 (1), 010103 (2018).

- J. Von Korff, B. Archibeque, K. Gomez, T. Heckendorf, S. McKagan, E. Sayre, E. Schenk, C. Shepherd, and L. Sorell, Secondary analysis of teaching methods in introductory physics: A 50 k-student study, Am. J. Phys. 84 (12), (2016).

- J. Wells, R. Henderson, J. Stewart, G. Stewart, J. Yang, and A. Traxler, Exploring the structure of misconceptions in the Force Concept Inventory with modified module analysis, Phys. Rev. Phys. Educ. Res. 15 (2), 020122 (2019).

- C. Wheatley, J. Wells, D. Pritchard, and J. Stewart, Comparing conceptual understanding across institutions with module analysis, Phys. Rev. Phys. Educ. Res. 18 (2), 020132 (2022).

- J. Yang, J. Wells, R. Henderson, E. Christman, G. Stewart, and J. Stewart, Extending modified module analysis to include correct responses: Analysis of the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 16 (1), 010124 (2020).

- J. Yasuda, M. Hull, and N. Mae, Improving test security and efficiency of computerized adaptive testing for the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 18 (1), 010112 (2022).

- J. Yasuda, N. Mae, M. Hull, and M. Taniguchi, Analyzing false positives of four questions in the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 14 (1), 010112 (2018).

- J. Yasuda, N. Mae, M. Hull, and M. Taniguchi, Analysis to Develop Computerized Adaptive Testing with the Force Concept Inventory, J. Phys. Conf. Ser. 1929 (1), 012009 (2021).

- J. Yasuda, N. Mae, M. Hull, and M. Taniguchi, Analyzing the measurement error from false positives in the Force Concept Inventory, J. Phys. Conf. Ser. 1287 (1), 012033 (2019).

- J. Yasuda, N. Mae, M. Hull, and M. Taniguchi, Optimizing the length of computerized adaptive testing for the Force Concept Inventory, Phys. Rev. Phys. Educ. Res. 17 (1), 010115 (2021).

PhysPort provides translations of assessments as a service to our users, but does not endorse the accuracy or validity of translations. Assessments validated for one language and culture may not be valid for other languages and cultures.


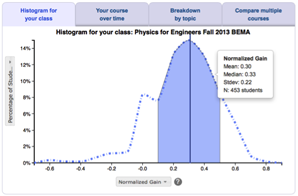

Score the FCI on the PhysPort Data Explorer

With one click, you get a comprehensive analysis of your results. You can:

- Examine your most recent results

- Chart your progress over time

- Break down any assessment by question or cluster

- Compare between courses

| Typical Results |

|---|

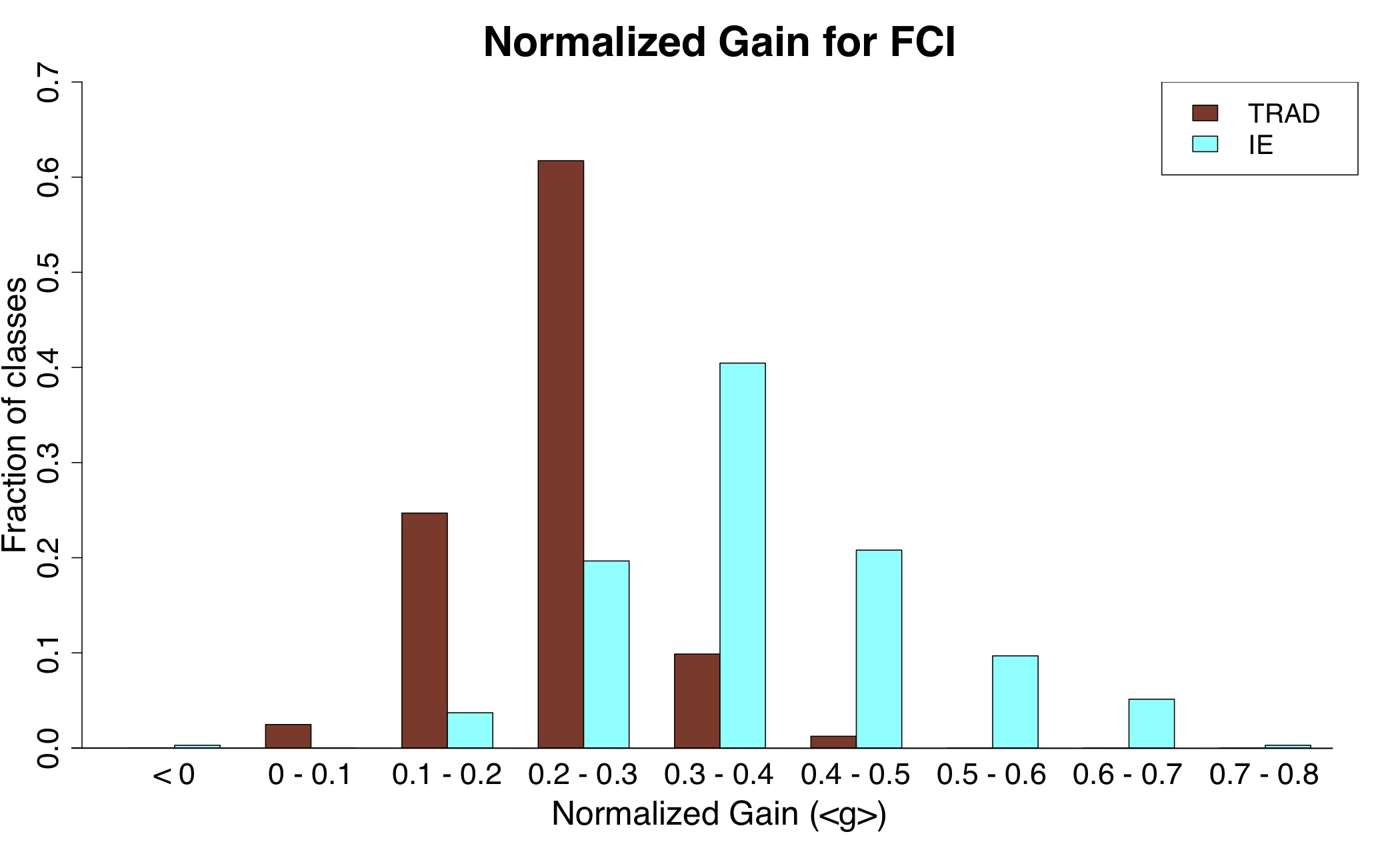

This figure from Von Korff et al. 2016 presents typical FCI normalized gains for two different teaching method types, interactive engagement and traditional lecture, for US and Canadian college students at a wide variety of institution and class types. Courses taught using interactive engagement methods have higher normalized gains than those taught using traditional lecture. These results are from a metaanalysis of FCI gains for 31,000 students in 450 classes, published in 63 papers. The FCI has also been given to tens of thousands of students in high school and outside of the US, who are not included in this study. The average normalized gain is 0.39 for interactive engagement and 0.22 for traditional lecture.

|
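For readers who want to compare their own class against these figures, the normalized gain defined in Hake (1998) is the actual average gain divided by the maximum possible gain, g = (post − pre) / (100 − pre), with scores in percent. The short Python sketch below computes it from class-average scores. The function names and the example pre/post averages are hypothetical and chosen only for illustration; the sketch assumes the 30-question v95 and does not reproduce the PhysPort scoring tool.

```python
def fci_percent(num_correct: float, num_questions: int = 30) -> float:
    """Convert a raw FCI score to a percentage (v95 has 30 questions)."""
    if not 0 <= num_correct <= num_questions:
        raise ValueError("num_correct must lie between 0 and num_questions")
    return 100.0 * num_correct / num_questions


def normalized_gain(pre_pct: float, post_pct: float) -> float:
    """Normalized gain: actual gain divided by the maximum possible gain.

    Both arguments are class-average scores in percent. A pre-test average
    of 100% leaves no room for gain, so it is rejected.
    """
    if not 0 <= pre_pct < 100:
        raise ValueError("pre_pct must be in [0, 100)")
    if not 0 <= post_pct <= 100:
        raise ValueError("post_pct must be in [0, 100]")
    return (post_pct - pre_pct) / (100.0 - pre_pct)


# Hypothetical class averages, not data from any study:
# a class that starts at 15/30 correct and ends at 69.5%.
pre = fci_percent(15)          # 50.0
post = 69.5
print(round(normalized_gain(pre, post), 2))
```

Computing the gain from class averages, as here, can differ from averaging each student's individual gain, so it is worth checking which convention a published figure uses before comparing.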

The latest version of the FCI, released in 1995, is called v95. This version has 30 questions, with ‘fewer ambiguities and a smaller likelihood of false positives’ than the original version (Hake 1998). The original 1992 version has 29 questions (Hestenes et al. 1992). The 1992 version was itself a revision of an earlier assessment called the Mechanics Diagnostic Test (MDT) (Halloun & Hestenes 1985).

There are also several variations of the FCI. All of the following variations have the same answer key as v95:

- The Gender FCI (aka Everyday FCI) uses the same questions and answer choices as the original FCI, but changes the contexts to make them more "everyday" or "feminine" (McCullough & Meltzer, 2001; McCullough, 2011).

- The Animated FCI takes the original FCI questions and animates the diagrams, so it is given on a computer (Dancy & Beichner, 2006).

- The Familiar Context FCI was adapted from the Gender FCI by Jane Jackson.

- The Simplified FCI was adapted from the original FCI by Jane Jackson for ninth grade physics. It is written at a 7th grade reading level and includes more illustrations, but assesses the same concepts (Osborn Popp & Jackson, 2009).

Another variation is less similar to the FCI:

- The Representational Variant of the FCI takes nine questions from the original FCI and redesigns them using various representations (such as motion map, vectorial, and graphical), yielding 27 multiple-choice questions concerning Newton's first, second, and third laws, and gravitation (Nieminen et al., 2010).

Variations

| Assessment | Focus | Level | Format |
|---|---|---|---|
| Representational Variant of the Force Concept Inventory | Mechanics content knowledge (graphing, kinematics, forces, multiple representations) | High school | Pre/post, multiple-choice |
| Gender FCI | Mechanics content knowledge (kinematics, forces) | Intro college, high school | Pre/post, multiple-choice |
| Mechanics Baseline Test | Mechanics content knowledge (kinematics, forces, energy, momentum) | Intro college, high school | Multiple-choice |
| Simplified Force Concept Inventory | Mechanics content knowledge (kinematics, forces) | High school, middle school | Pre/post, multiple-choice |
| Familiar Context Force Concept Inventory | Mechanics content knowledge (kinematics, forces) | Intro college, high school | Pre/post, multiple-choice |
| Half-length Force Concept Inventory | Mechanics content knowledge (kinematics, forces) | Intro college, high school | Pre/post, multiple-choice |