Peer Instruction

What does the research say?

This is the second highest level of research validation, corresponding to:

- at least 1 of the "based on" categories

- at least 2 of the "demonstrated to improve" categories

- at least 4 of the "studied using" categories

Research Validation Summary

Based on Research Into:

- theories of how students learn

- student ideas about specific topics

Demonstrated to Improve:

- conceptual understanding

- problem-solving skills

- lab skills

- beliefs and attitudes

- attendance

- retention of students

- success of underrepresented groups

- performance in subsequent classes

Studied Using:

- cycle of research and redevelopment

- student interviews

- classroom observations

- analysis of written work

- research at multiple institutions

- research by multiple groups

- peer-reviewed publication

Research base behind the design of Peer Instruction

The development of Peer Instruction was motivated by the research of Halloun and Hestenes 1985, whose work led to the Force Concept Inventory (FCI) (Hestenes, Wells, and Swackhamer 1992), a test of students’ conceptual understanding of forces. This research showed that after traditional lecture instruction, students’ understanding of the most basic concepts of forces is very poor, but that teaching methods based on physics education research (PER) can significantly improve this understanding.

Many of the questions used as ConcepTests in Peer Instruction are also based on the research literature identifying specific student difficulties with physics concepts (for an overview, see McDermott and Redish 1999). ConcepTests are often designed to elicit these difficulties, with the wording of multiple-choice options based on actual student responses to open-ended questions reported in the research literature.

Research involved in the development of Peer Instruction

Mazur, the developer of Peer Instruction, read about earlier research on student difficulties, and thought it could not possibly apply to his students at Harvard. He gave the FCI in his traditional lecture class and was shocked to find that the learning gains for his students at Harvard were comparable to those for students in lecture classes at other institutions. (Mazur 1997)

Mazur tells the story of a student who asked, while he was giving the FCI, “Professor Mazur, how should I answer these questions? According to what you taught me? Or according to the way I usually think about these things?” This story inspired later research in which students were asked to answer the question according to their own understanding, and according to how a physicist would answer the question, and there were large differences between the two. (Mazur 1997)

To test how problem solving relates to conceptual understanding in a different area of physics, Mazur gave two different exam problems on electric circuits. One was a complex mathematical problem, and one was a conceptual problem that to a physicist appears much simpler. In fact, he had trouble convincing a colleague to allow him to put the conceptual problem on the exam because the colleague thought it would be too easy. He found that students performed much better on the mathematical problem than on the conceptual problem. He plotted students’ conceptual scores as a function of their conventional scores, and found that while there were many students who scored well on the conventional problem and poorly on the conceptual problem, the converse was not true: there were no students who scored well on the conceptual problem and poorly on the conventional problem. This result suggests that conceptual understanding helps with problem solving, but the ability to solve traditional problems does not help with conceptual understanding. (Mazur 1997)

Research showing the effectiveness of Peer Instruction

Mazur gave the FCI in class before implementing Peer Instruction and after. Before, he got a gain of 25%, typical for a traditional lecture class, and after he got gains on the order of 50%, which is about average for PER-based teaching methods. (Mazur 1997)

One common concern about PER-based teaching methods such as Peer Instruction is that the focus on conceptual understanding might hurt students’ ability to do traditional problem solving. To address this concern, Mazur used a final exam that was entirely focused on traditional problem solving. In 1991, after implementing Peer Instruction, he gave the same exam that he had given in 1985, when he was using traditional lecture methods. The average score in 1991 was 69%, compared to 63% in 1985, a statistically significant difference. (Mazur 1997)

Crouch and Mazur also tested problem-solving ability by giving the Mechanics Baseline Test (MBT), a research-based assessment instrument that includes quantitative questions as well as conceptual questions, in classes using Peer Instruction and in classes using traditional lectures. Students in the Peer Instruction classes scored higher on the test as a whole and on the quantitative questions. (Crouch and Mazur 2001)

Crouch and Mazur tested retention of learning from ConcepTests by matching them to free-response conceptual questions based on the ConcepTests but with a new physical context on exams. Student performance on the exam questions was comparable to their performance on the original ConcepTest after discussion. (Crouch and Mazur 2001)

Research on the use of Peer Instruction in different environments

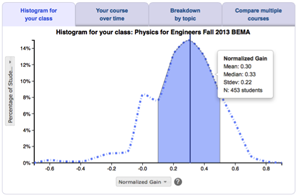

Peer Instruction is among the most widely adopted and most commonly modified PER-based teaching methods (Henderson and Dancy 2009). A great deal of research has been done on the implementation and adaptation of Peer Instruction in different environments. Fagen, Crouch, and Mazur 2002 surveyed 384 Peer Instruction users and collected FCI scores from instructors of 30 courses at 11 colleges and universities, and found an average gain of 39%. This average gain is less than that found at Harvard, but still within the “medium-g” range typical of classes using PER-based teaching methods, and higher than that found in classes using traditional lecture methods (Hake 1998).
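The “medium-g” label comes from the normalized gain used by Hake 1998: the fraction of the possible improvement that a class actually achieves, (post − pre) / (100 − pre), with class-average scores in percent. The following is a minimal sketch of that calculation, assuming the gains quoted in this section are normalized gains of this kind. The function names and the example scores are hypothetical and are not taken from any of the cited studies.

```python
def normalized_gain(pre_percent: float, post_percent: float) -> float:
    """Normalized gain g = (post - pre) / (100 - pre), scores in percent."""
    if not 0 <= pre_percent < 100:
        raise ValueError("pre-test average must be in [0, 100)")
    if not 0 <= post_percent <= 100:
        raise ValueError("post-test average must be in [0, 100]")
    return (post_percent - pre_percent) / (100.0 - pre_percent)


def hake_band(g: float) -> str:
    """Classify a normalized gain using the bands defined in Hake 1998."""
    if g >= 0.7:
        return "high-g"
    if g >= 0.3:
        return "medium-g"
    return "low-g"


if __name__ == "__main__":
    # Illustrative (made-up) class averages: 40% before, 70% after instruction.
    g = normalized_gain(40, 70)
    print(g, hake_band(g))  # prints: 0.5 medium-g
```

Under this classification, an average gain of 39% (g = 0.39) falls in the medium-g band, while a gain of 25% (g = 0.25) falls in the low-g band.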

Turpen and Finkelstein 2009 conducted qualitative research using classroom observations and interviews to characterize the different ways that instructors implement Peer Instruction, and found that practices vary widely and that different practices establish different classroom norms. Henderson and Dancy 2009 interviewed instructors using Peer Instruction and found that most instructors made significant modifications to the method.

One frequently asked question about Peer Instruction is which of the specific elements outlined by Mazur are critical to success, and which may be adapted without negative consequences. For example, is it necessary for students to answer each question twice, first individually and then after peer discussion?

Lasry, Charles, Whittaker, and Lautman 2009 studied the importance of peer discussion by assigning students to participate in one of three variations on Peer Instruction. Each group answered every question in a series twice: first after thinking individually, and then again after a short interval. In between responses, one group discussed the question with peers, a second group reflected quietly, and a third group looked at an unrelated sequence of cartoons. Lasry et al. found that students who engaged in peer discussion performed significantly better on the questions the second time than the students in the other two groups. These results suggest that it is the peer discussion, rather than simply having extra time to think about the question, that leads to the increase in correct answers.

Another common concern is whether the increase in correct answers after peer discussion is due to learning through discussion, or due to the students who know the answer giving it to the students who don't. Smith, Wood, Adams, Wieman, Knight, Guild, and Su 2009 studied this concern by asking students a pair of isomorphic clicker questions. They found that after discussion of the first question, there was a significant increase in the number of students answering the second question correctly individually, suggesting that students had learned something from the peer discussion of the first question that they could apply to the second question. This was true even among students who answered the first question wrong both times.

A more controversial question is whether it is necessary for students to answer questions individually first, or if Peer Instruction works just as well if this step is skipped. A post on the Peer Instruction blog, and the comments on that post, discuss this question. While no studies have directly addressed the question, the results of Singh 2005 suggest that answering individually first may not be critical. Singh asked two groups of students to answer questions on the Conceptual Survey of Electricity and Magnetism (CSEM). One group answered the questions individually first, then worked in pairs and answered the same questions again (similar to Peer Instruction), and the other group answered the questions in pairs first and then individually. For the first group, as expected, scores increased significantly after the students worked in pairs. However, the second group performed just as well after working in pairs with no time to think through the questions individually first (and the extra time working on their own afterwards did not significantly change their scores). These results indicate that students can perform just as well on group activities if the individual answer step is skipped. However, this study did not test whether they were able to apply this learning in any other context.

Peer Instruction was originally developed for introductory physics classes, but it can also be used in upper-division classes. The University of Colorado has implemented Peer Instruction in its upper-division E&M and Quantum Mechanics courses and found that it is effective for student learning (Chasteen and Pollock 2009) and that both instructors (Pollock, Chasteen, Dubson, and Perkins 2010) and students (Perkins and Turpen 2009) value it.

References

- E. Corpuz and R. Rosalez, The Use of a Web-Based Classroom Interaction System in Introductory Physics Classes, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- C. Crouch, A. Fagen, J. Callan, and E. Mazur, Classroom demonstrations: Learning tools or entertainment?, Am. J. Phys. 72 (6), 835 (2004).

- C. Crouch and E. Mazur, Peer instruction: Ten years of experience and results, Am. J. Phys. 69 (9), 970 (2001).

- C. Crouch, J. Watkins, A. Fagen, and E. Mazur, Peer Instruction: Engaging Students One-on-One, All at Once, in Research-Based Reform of University Physics, edited by E. Redish and P. Cooney, (American Association of Physics Teachers, College Park, 2007), Vol. 1.

- K. Cummings and S. Roberts, A Study of Peer Instruction Methods with High School Physics Students, presented at the Physics Education Research Conference 2008, Edmonton, Canada, 2008.

- M. Dancy, C. Turpen, and C. Henderson, Why Do Faculty Try Research Based Instructional Strategies?, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- D. Demaree and S. Li, Promoting productive communities of practice: An instructor’s perspective, presented at the Physics Education Research Conference 2009, Ann Arbor, Michigan, 2009.

- A. Fagen, C. Crouch, and E. Mazur, Peer instruction: Results from a range of classrooms, Phys. Teach. 40 (4), 206 (2002).

- M. James, The effect of grading incentive on student discourse in Peer Instruction, Am. J. Phys. 74 (8), 689 (2006).

- M. James, F. Barbieri, and P. Garcia, What Are They Talking About? Lessons Learned from a Study of Peer Instruction, Astron. Educ. Rev. 7 (1), 37 (2008).

- L. Jones, A. Miller, and J. Watts, Conceptual teaching and quantitative problem solving: friends or foes?, J. Cooperat. Collab. Coll. Teach. 10 (3), 109 (2001).

- V. Kuo, P. Kohl, and L. Carr, Socratic Dialogs and Clicker use in an Upper-Division Mechanics Course, presented at the Physics Education Research Conference 2011, Omaha, Nebraska, 2011.

- N. Lasry, Clickers or Flashcards: Is There Really a Difference?, Phys. Teach. 46 (4), 242 (2008).

- N. Lasry, E. Charles, C. Whittaker, and M. Lautman, When Talking Is Better Than Staying Quiet, presented at the Physics Education Research Conference 2009, Ann Arbor, Michigan, 2009.

- N. Lasry, E. Mazur, and J. Watkins, Peer Instruction: From Harvard to the two-year college, Am. J. Phys. 76 (11), 1066 (2008).

- J. Lenaerts, W. Wieme, and E. Van Zee, Peer instruction: A case study for an introductory magnetism course, Eur. J. Phys. 24 (1), 7 (2003).

- S. Li and D. Demaree, Promoting and Studying Deep-Level Discourse During Large-Lecture Introductory Physics, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- M. Lorenzo, C. Crouch, and E. Mazur, Reducing the gender gap in the physics classroom, Am. J. Phys. 74 (2), 118 (2006).

- E. Mazur, Peer Instruction: A User's Manual (Prentice Hall, Upper Saddle River, 1997).

- S. McKagan, K. Perkins, and C. Wieman, Reforming a large lecture modern physics course for engineering majors using a PER-based design, presented at the Physics Education Research Conference 2006, Syracuse, New York, 2006.

- D. Meltzer and K. Manivannan, Transforming the lecture-hall environment: The fully interactive physics lecture, Am. J. Phys. 70 (6), 639 (2002).

- H. Nitta, Mathematical theory of peer-instruction dynamics, Phys. Rev. ST Phys. Educ. Res. 6 (2), 020105 (2010).

- K. Perez, E. Strauss, N. Downey, A. Galbraith, R. Jeanne, and S. Cooper, Does Displaying the Class Results Affect Student Discussion during Peer Instruction?, CBE Life. Sci. Educ. 9 (2), 133 (2010).

- K. Perkins and C. Turpen, Student Perspectives on Using Clickers in Upper-division Physics Courses, presented at the Physics Education Research Conference 2009, Ann Arbor, Michigan, 2009.

- S. Pollock, No Single Cause: Learning Gains, Student Attitudes, and the Impacts of Multiple Effective Reforms, presented at the Physics Education Research Conference 2004, Sacramento, California, 2004.

- S. Pollock, Transferring Transformations: Learning Gains, Student Attitudes, and the Impacts of Multiple Instructors in Large Lecture Courses, presented at the Physics Education Research Conference 2005, Salt Lake City, Utah, 2005.

- S. Pollock, S. Chasteen, M. Dubson, and K. Perkins, The use of concept tests and peer instruction in upper-division physics, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- S. Pollock and N. Finkelstein, Sustaining educational reforms in introductory physics, Phys. Rev. ST Phys. Educ. Res. 4 (1), 010110 (2008).

- E. Price, C. De Leone, and N. Lasry, Comparing Educational Tools Using Activity Theory: Clickers and Flashcards, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- C. Singh and G. Zhu, Improving students' understanding of quantum mechanics by using peer instruction tools, presented at the Physics Education Research Conference 2011, Omaha, Nebraska, 2011.

- M. Smith, W. Wood, W. Adams, C. Wieman, J. Knight, N. Guild, and T. Su, Why Peer Discussion Improves Student Performance on In-Class Concept Questions, Science 323 (5910), 122 (2009).

- C. Turpen, M. Dancy, and C. Henderson, Faculty Perspectives On Using Peer Instruction: A National Study, presented at the Physics Education Research Conference 2010, Portland, Oregon, 2010.

- C. Turpen and N. Finkelstein, Understanding How Physics Faculty Use Peer Instruction, presented at the Physics Education Research Conference 2007, Greensboro, North Carolina, 2007.

- C. Turpen and N. Finkelstein, Not all interactive engagement is the same: Variations in physics professors’ implementation of Peer Instruction, Phys. Rev. ST Phys. Educ. Res. 5 (2), 020101 (2009).

- C. Turpen and N. Finkelstein, The construction of different classroom norms during Peer Instruction: Students perceive differences, Phys. Rev. ST Phys. Educ. Res. 6 (2), 020123 (2010).

- E. Watkins and M. Sabella, Examining the Effectiveness of Clickers on Promoting Learning by Tracking the Evolution of Student Responses, presented at the Physics Education Research Conference 2008, Edmonton, Canada, 2008.

- J. Watkins and E. Mazur, Just-in-Time Teaching and Peer Instruction, in Just in Time Teaching: Across the Disciplines, Across the Academy (2009), p. 39.

- G. Zhu and C. Singh, Improving students’ understanding of quantum measurement. II. Development of research-based learning tools, Phys. Rev. ST Phys. Educ. Res. 8 (1), 010118 (2012).